Imagery in articles can be a wonderful communication device when used correctly. One issue that still plagues SEO content teams is how to properly handle text in images. Historically, text within an image is trapped and the contextual message is lost to search engines that didn’t have the processing power to decode (they still likely don’t outside of image search or are limited).

For me, it’s been best practice to either limit the amount of text in images or make sure there is sufficient alt text and caption support. That way search engines can still benefit and process the text found within the visual image.

Over time, article libraries get really large and it’s difficult to determine just what level of a problem this is for you and your team. In this Python SEO guide, I’ll show you step by step how easy it is to set up a script to crawl your website and uncover issues with text in the images you serve. Then you can decide if you need to adjust the alt text, include a caption, or redo the image without text and instead include the text in the copy.

Table of Contents

Requirements and Assumptions

- Python 3 is installed and basic Python syntax is understood.

- Access to a Linux installation (I recommend Ubuntu) or Google Colab.

- Crawl CSV with URLs with URL column titled “urls”.

- I would be careful running this on a site with tens of thousands of images or more.

- Careful with copying the code as indents are not always preserved well.

Install Python Modules

If you don’t already have Google Tesseract installed on your system let’s do that first. Remember, if you are using Google Colab, add an exclamation mark at the beginning.

sudo apt install tesseract-ocr -y

Now that we have the backend program installed, we need to install the pytesseract Python module that acts as a wrapper for the program we installed above.

pip3 install pytesseract

Import Python Modules

- Image: helps to open and read the image file

- pytesseract: the parent module for processes the image for text recognition

- image_to_string: the actual class that does the text recognition

- io: converts image into a readable state for tesseract

- requests: makes the HTTP calls for each URL and each image

- pandas: for storing the results in a dataframe

- BeautifulSoup: for scraping the images from each page

- pathlib: to easily identify the image type by the suffix

from PIL import Image import pytesseract from pytesseract import image_to_string import io import requests import pandas as pd from bs4 import BeautifulSoup import pathlib

Setup Lists, Dataframe and Accepted Formats

Next, we set up our lists where we will store our data before adding it to the dataframe. The empty dataframe is also created along with a short list of acceptable image types we can allow for processing.

alt_text_list = [] img_text_list = [] urls_list = [] img_list = [] df1 = pd.DataFrame(columns = ['url', 'img url', 'alt text', 'image text']) img_formats = [".jpg",".jpeg",".gif",".png",".webp"]

Import URL Data from CSV

Now is the time we import our URLs from the crawl data and convert the dataframe column to a list to easily loop through.

df = pd.read_csv("urls.csv")

urls = df["urls"].tolist()

Process URLs for Images

Next, we loop through each URL in our list to:

- Collected all the image HTML tags on that page.

- If an image tag lacks a src attribute, mark it in the src list as empty. If not, add the src path to the list.

- If an image tag lacks an alt attribute, mark it in the alt list as empty. If not, add the alt text to the list.

for y in urls:

response = requests.get(y)

soup = BeautifulSoup(response.text, 'html.parser')

img_tags = soup.find_all('img')

img_srcs = [img['src'] if img.has_attr('src') else '-' for img in img_tags]

img_alts = [img['alt'] if img.has_attr('alt') else '-' for img in img_tags]

Process Images Found

Now that we have two lists, one containing the image src paths and the other containing the alt text, we loop through the img_srcs list for the URL we just crawled, and for each image, in the img_srcs list, we add it to another final list along with adding the URL to a URL list to connect the image with a URL.

for count, x in enumerate(img_srcs):

img_list.append(x)

urls_list.append(y)

The next chuck is fairly large, but because there is a lot going on, I didn’t want to split it up.

Before we process the image for text recognition we want to detect if the image is available to be processed. If it has a dash as a value as we set earlier if not a valid src then simply output the image as not a valid src. If it is valid, then we use the pathlib module to test the image src against our list of acceptable image formats. If that passes then we open the image using the Image and io modules and then pass it to the pytesseract module for text recognition. The rest of the script chuck is just a series of if/then/else depending on if the image is a supported format, has a source, and has an alt. Depending on how those shake out, various results will be appended to our master lists.

Perform Image to Text Recognition

if x != '-':

print(pathlib.Path(x).suffix)

if pathlib.Path(x).suffix in img_formats:

response = requests.get(x)

img = Image.open(io.BytesIO(response.content))

text = pytesseract.image_to_string(img)

if img_alts[count] != '-':

alt_text_list.append(img_alts[count])

else:

alt_text_list.append("no alt")

img_text_list.append(text)

else:

img_text_list.append("not supported")

if img_alts[count] != '-':

alt_text_list.append(img_alts[count])

else:

alt_text_list.append("no alt")

else:

img_text_list.append("no src")

if img_alts[count] != '-':

alt_text_list.append(img_alts[count])

else:

alt_text_list.append("no alt")

The last step is to append the lists to the empty dataframe and display

df1['url'] = urls_list df1['img url'] = img_list df1['alt text'] = alt_text_list df1['image text'] = img_text_list df1

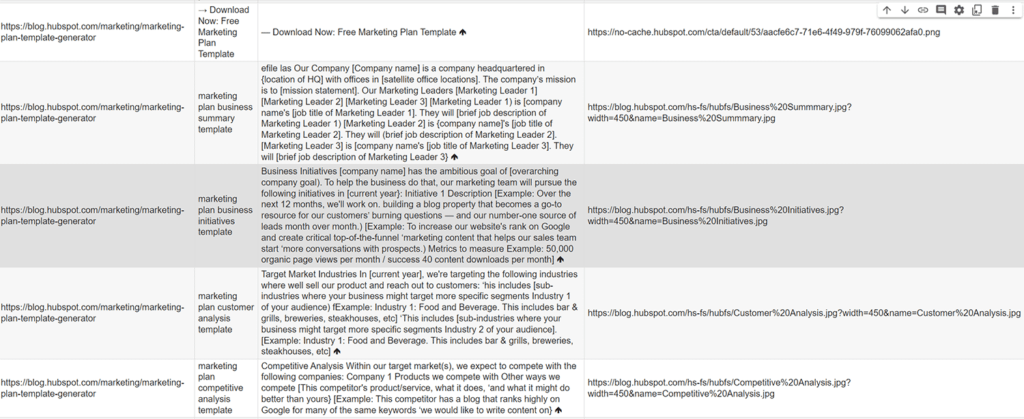

Example Output

URL | Alt Text | Img Text | Image Src

Conclusion

Remember to try and make my code even more efficient and extend it in ways I never thought of! I can think of a few ways we can extend this script:

- Detect copy around the image such as captions.

- Analyze the alt text vs the in-image text

- Exclude previously processed images (duplicates)

- Examine and compare EXIF data

Now get out there and try it out! Follow me on Twitter and let me know your Python SEO applications and ideas!

Tesseract FAQ

How can Python and Tesseract be used to detect text in images in bulk for SEO analysis?

Python scripts can be developed to leverage the Tesseract OCR (Optical Character Recognition) engine, enabling the bulk detection of text within images for SEO purposes.

Which Python libraries are commonly used for detecting text in images in bulk with Tesseract?

Commonly used Python libraries for this task include pytesseract for Tesseract integration, PIL (Pillow) for image processing, and pandas for data manipulation.

What specific steps are involved in using Python and Tesseract for bulk text detection in images?

The process includes fetching a collection of images, applying Tesseract OCR to extract text, preprocessing the text data, and using Python for further analysis and insights for SEO.

Are there any considerations or limitations when using Python for bulk text detection in images with Tesseract?

Consider the quality and clarity of the images, potential errors in OCR, and the need for a clear understanding of the goals and criteria for text extraction. Regular updates to the analysis may be necessary.

Where can I find examples and documentation for detecting text in images in bulk with Python and Tesseract?

Explore online tutorials, documentation for Tesseract, and resources specific to OCR and image processing for practical examples and detailed guides on detecting text in images using Python and Tesseract for SEO analysis.

- Build a Custom Named Entity Visualizer with Google NLP - June 19, 2024

- Storing CrUX CWV Data for URLs Using Python for SEOs - January 20, 2024

- Scraping YouTube Video Page Metadata with Python for SEO - January 4, 2024