Detect Generic Anchor Text in Links for SEO using Python

Optimizing anchor text for internal links has long been a core SEO practice. Google documents anchor text in its SEO guidelines. Anchor text gives users and search engines contextual signals about the linked page’s topic. It’s an opportunity to tell both Google and users what the next page is about and why it is relevant.

Many internal links use non-descriptive anchors like “click here”, “learn more”, or “link”. These are missed opportunities that should be addressed. This Python SEO guide shows a framework for detecting generic anchor text across many pages and outputs a table ready for optimization.

Requirements and Assumptions

- Python 3 is installed, and you understand basic Python syntax.

- Access to a Linux installation (Ubuntu recommended) or Google Colab.

- A crawl CSV named urls.csv with a column titled “url” containing the URLs.

- An understanding of Regular Expressions.

- Be careful copying the code; indentation may not be preserved.

Import Python Modules

Start by importing the modules required for this script.

- bs4: An HTML parser used to retrieve links from a page

- re: For regular expressions used to detect words in anchor text

- requests: Performs the HTTP request for each URL

- pandas: For storing results in a DataFrame

from bs4 import BeautifulSoup
import re
import pandas as pd
import requests

Write RegEx for Text Matching

Now we’ll construct our regex list, which contains the patterns to look for in anchor text. You can add more patterns as needed. I included ‘www’ and ‘http’ to surface cases where the anchor text is the URL. Here’s an explanation of the components I used.

- (.*)? optionally matches any content before the target word.

- (?<![a-z]) is a negative lookbehind. It prevents a match when a letter immediately precedes the target word, avoiding matches inside other words. The patterns are later matched with re.IGNORECASE, so [a-z] also covers uppercase letters.

- (?![a-z]) is a negative lookahead. It prevents a match when a letter immediately follows the target word, avoiding matches inside other words.

- (.*)? optionally matches any content after the target word.

generic_anchors = [
    '(.*)?(?<![a-z])here(?![a-z])(.*)?',
    '(.*)?(?<![a-z])follow(?![a-z])(.*)?',
    '(.*)?(?<![a-z])click(?![a-z])(.*)?',
    '(.*)?(?<![a-z])learn(?![a-z])(.*)?',
    '(.*)?(?<![a-z])read(?![a-z])(.*)?',
    '(.*)?(?<![a-z])more(?![a-z])(.*)?',
    '(.*)?(?<![a-z])go(?![a-z])(.*)?',
    '(.*)?(?<![a-z])link(?![a-z])(.*)?',
    '(.*)?(?<![a-z])watch(?![a-z])(.*)?',
    '(.*)?(?<![a-z])find(?![a-z])(.*)?',
    '(.*)?(?<![a-z])webpage(?![a-z])(.*)?',
    '(.*)?(?<![a-z])website(?![a-z])(.*)?',
    '(.*)?(?<![a-z])page(?![a-z])(.*)?',
    '(.*)?(?<![a-z])www(?![a-z])(.*)?',
    '(.*)?(?<![a-z])http(?![a-z])(.*)?'
]
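To see what the lookarounds do in practice, here is a minimal sketch that tests one pattern from the list against a few anchors. The sample strings are made up for illustration.

```python
import re

# One pattern from the list above: "here" not touching any other letter.
pattern = '(.*)?(?<![a-z])here(?![a-z])(.*)?'

# Hypothetical anchor texts, chosen only to illustrate the behavior.
samples = ["Click here", "here", "There are more", "Adhere to this", "HERE!"]

# re.IGNORECASE applies to the word and to the [a-z] classes alike.
results = {s: bool(re.match(pattern, s, re.IGNORECASE)) for s in samples}

for text, matched in results.items():
    print(f"{text!r}: {matched}")
```

The anchors “There are more” and “Adhere to this” contain the letters “here”, but a letter precedes them, so the lookbehind rejects the match.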

Data Import and Storage

Next, import the URL list and convert the “url” column to a Python list for looping. Then create an empty DataFrame with three columns to hold the results. Finally, create a few empty lists for temporary storage before transferring data into the DataFrame.

df = pd.read_csv('urls.csv')

url_list = df['url'].tolist()

df1 = pd.DataFrame(columns = ['url', 'internal link', 'anchor text'])

url = []

internal_link = []

anchor_text = []

Process the URLs

Now loop through the URL list from the crawl CSV. For each URL, fetch the page HTML using get() and create a BeautifulSoup object from the HTML. Remove several HTML tags with decompose() to exclude templated areas like navigation. You can add other block-level tags to exclude. Finally, find all anchor tags and store them in the links object.

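for x in url_list:
    # Fetch the page; the timeout keeps one slow server from stalling the loop
    html = requests.get(x, timeout=10)
    soup = BeautifulSoup(html.text, 'html.parser')
    # Remove templated areas so their links are ignored
    for tag in soup(["header","footer","aside","nav","script"]):
        tag.decompose()
    # Collect every remaining anchor tag
    links = soup.find_all('a')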
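The effect of decompose() is easier to see on a small, static page. The sketch below assumes a made-up HTML snippet rather than a live request, and shows that links inside the removed tags no longer appear in the results.

```python
from bs4 import BeautifulSoup

# Made-up HTML for illustration: links in the nav, body copy, and footer.
html = """
<html><body>
  <nav><a href="/home">Home</a></nav>
  <main><p>Read our guide: <a href="/guide">click here</a></p></main>
  <footer><a href="/privacy">Privacy</a></footer>
</body></html>
"""

soup = BeautifulSoup(html, "html.parser")

# Remove templated areas so their links are not collected.
for tag in soup(["header", "footer", "aside", "nav", "script"]):
    tag.decompose()

# Only the link inside <main> is left.
hrefs = [a.get("href") for a in soup.find_all("a")]
print(hrefs)
```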

Clean the Data

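HTML often contains anchor tags with no anchor text, such as links that wrap only an image, or None-type entries. Use a list comprehension to filter these out to avoid errors in the next block. Then combine the regex patterns using join() to create a single large regex string, which you’ll use in the following code block.

    # Drop None entries and anchors with no visible text
    links = [link for link in links if link is not None and link.get_text(strip=True)]
    # Join the patterns into one alternation: (p1)|(p2)|...
    combined = "(" + ")|(".join(generic_anchors) + ")"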
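Because the patterns differ only in the target word, one possible simplification is to build a single pattern from a plain word list and compile it once, outside the URL loop. This is a sketch of an alternative, not the approach used in this guide. The names generic_words, generic_pattern, and is_generic are assumptions, and the word list is shortened for brevity. It should behave similarly for single-line anchor text.

```python
import re

# Shortened, hypothetical word list; extend it with the remaining terms.
generic_words = ["here", "click", "learn", "read", "more", "link", "www", "http"]

# One alternation wrapped in the same letter-boundary lookarounds.
generic_pattern = re.compile(
    r"(?<![a-z])(?:" + "|".join(map(re.escape, generic_words)) + r")(?![a-z])",
    re.IGNORECASE,
)

def is_generic(text):
    """Return True if the anchor text contains a generic word."""
    # search() scans the whole string, so no leading (.*)? is needed.
    return generic_pattern.search(text.strip()) is not None

print(is_generic("Learn more"))
print(is_generic("Python SEO guide"))
print(is_generic("Adhere to rules"))
```

Compiling once avoids rebuilding the same string for every URL, and a plain word list is easier to maintain than fifteen near-identical patterns.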

Process each link

I’ll describe the next code block line by line:

- Loop through each link in the links object.

- If the anchor text length is greater than 0 and less than 20, process it. Adjust these thresholds as needed.

- If the combined regex matches the anchor text (after stripping whitespace and ignoring case), proceed.

- Append the source page URL, the link’s href, and the anchor text to the lists for storage.

- Repeat until all links are processed.

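    for y in links:
        # Only consider short anchors (1 to 19 characters)
        if len(y.text) != 0 and len(y.text) < 20:
            if re.match(combined, y.text.strip(), re.IGNORECASE):
                url.append(x)
                # .get() avoids a KeyError on anchors without an href
                internal_link.append(y.get("href"))
                anchor_text.append(y.text)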
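The loop above records every matching link, whether it points to the same site or not. If you only want internal links, one option is to resolve each href against the page URL and compare hostnames. The helper below is a hypothetical sketch using the standard library’s urllib.parse; it treats only an exact hostname match as internal, which may be too strict for sites that mix www and non-www hosts.

```python
from urllib.parse import urljoin, urlparse

def is_internal(page_url, href):
    """Return True if href resolves to the same hostname as page_url."""
    if not href:
        return False
    # Resolve relative links such as "/about" against the page URL.
    absolute = urljoin(page_url, href)
    parsed = urlparse(absolute)
    if parsed.scheme not in ("http", "https"):
        return False  # skips mailto:, tel:, javascript:, and similar
    return parsed.hostname == urlparse(page_url).hostname

page = "https://example.com/blog/post"
print(is_internal(page, "/about"))
print(is_internal(page, "https://example.com/contact"))
print(is_internal(page, "https://other.example.org/"))
print(is_internal(page, "mailto:me@example.com"))
```

Inside the loop, the check could be added to the existing condition, for example by also requiring is_internal(x, y.get("href")) before appending.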

Populate the dataframe

Finally, assign the lists to the DataFrame columns and display the DataFrame.

df1["url"] = url
df1["internal link"] = internal_link
df1["anchor text"] = anchor_text
df1

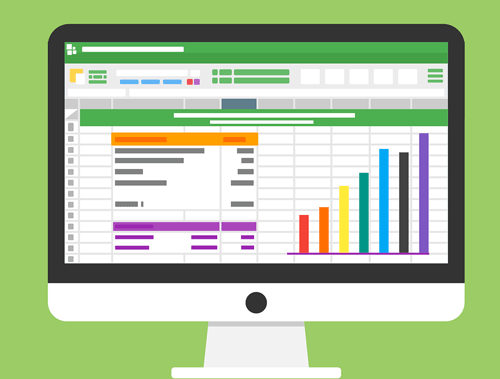

Sample Output
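Once the table is built, a frequency count can help prioritize which generic anchors to fix first. This sketch assumes a small made-up DataFrame with the same columns as df1; the rows are placeholders, not real results.

```python
import pandas as pd

# Made-up rows standing in for the real results.
df1 = pd.DataFrame({
    "url": ["https://example.com/a", "https://example.com/a", "https://example.com/b"],
    "internal link": ["/guide", "/pricing", "/guide"],
    "anchor text": ["Click here", "click here ", "Learn more"],
})

# Normalize case and whitespace so variants are counted together.
counts = df1["anchor text"].str.strip().str.lower().value_counts()
print(counts)
```

To review the full table in a spreadsheet, df1.to_csv() with index=False writes it out without the index column.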

Conclusion

That’s it. You now have a framework to analyze internal links for generic anchor text. Adjust the regex, the length thresholds, and the list of generic words as needed. Anchor text is important for communicating the topical relevance of the linked page: be descriptive and include keywords, but keep anchor text natural and user-friendly.

Now try it out! See if you can make my code more efficient or extend it in ways I haven’t thought of. Follow me on Twitter and share your SEO applications and ideas for internal link analysis!

Anchor Text FAQ

How can Python be employed to detect generic anchor text in links for SEO analysis?

Python scripts can fetch each page, extract its links, and test the anchor text against a list of generic terms or patterns such as “click here” or “learn more”.

Which Python libraries are commonly used for detecting generic anchor text in links?

This guide uses beautifulsoup for HTML parsing, re for pattern matching, requests for fetching pages, and pandas for data manipulation. A library such as nltk can be added if you want natural language processing.

What specific steps are involved in using Python to detect generic anchor text in links for SEO?

Steps include fetching page data, removing templated areas such as navigation, extracting and cleaning anchor text, applying pattern matching (or NLP) to identify generic terms, and storing the results in a table for review.

Are there any considerations or limitations when using Python for detecting generic anchor text in links?

Anchor text is diverse, so a fixed word list will miss some generic anchors and can flag descriptive anchors that happen to contain a listed word. Be clear about your goals and criteria for what counts as generic, tune the patterns and length thresholds accordingly, and rerun the analysis regularly as the site changes.

Where can I find examples and documentation for detecting generic anchor text in links with Python?

Start with the official documentation for Beautiful Soup, pandas, requests, and Python’s re module, which covers the functions used in this guide. Online tutorials and NLP resources offer further practical examples of detecting generic anchor text for SEO analysis.
